Data Mining and Machine Learning

John Samuel

CPE Lyon

Year: 2025-2026

Email: john.samuel@cpe.fr

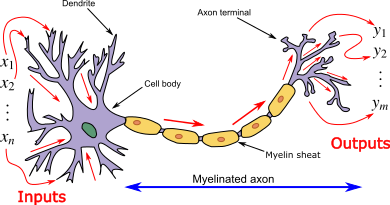

Neurons are organized into layers. A neural network generally has three types of layers: an input layer, one or more hidden layers, and an output layer.

The overall goal of training is to adjust the weights of the network so that it can generalize to new data, producing accurate results for examples it has not seen during training.
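As a minimal sketch of what "adjusting the weights" can look like, the following pure-Python example fits a single linear neuron by gradient descent and then measures its error on points held out from training. The toy data (generated from y = 2x + 1), the function names, the learning rate, and the epoch count are all assumptions chosen for illustration, not values from the course.

```python
def train_linear_neuron(xs, ys, learning_rate=0.05, epochs=1000):
    """Fit y ~ w * x + b by batch gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Move each parameter a small step against its gradient.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def mean_squared_error(w, b, xs, ys):
    """Average squared difference between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical toy data: training points and unseen (held-out) points.
train_x, train_y = [0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0]
test_x, test_y = [4.0, 5.0], [9.0, 11.0]

w, b = train_linear_neuron(train_x, train_y)
held_out_error = mean_squared_error(w, b, test_x, test_y)
print(f"w = {w:.3f}, b = {b:.3f}, held-out MSE = {held_out_error:.6f}")
```

A low error on the held-out points, which played no part in the weight updates, is what generalization means here.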

Activation Function: After the neuron's input (the weighted sum of its inputs plus a bias) is computed, it is passed through an activation function. This function introduces nonlinearity into the model, allowing the neural network to capture complex relationships and learn nonlinear patterns. Commonly used activation functions include the sigmoid, the hyperbolic tangent (tanh), ReLU, and softmax.
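To make this concrete, here is a small sketch of one neuron evaluated with three widely used activation functions. The choice of sigmoid, tanh, and ReLU, as well as the inputs, weights, and bias, are illustrative assumptions.

```python
import math


def sigmoid(z):
    """Squash z into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    """Squash z into the interval (-1, 1)."""
    return math.tanh(z)


def relu(z):
    """Keep positive values and replace negative values with zero."""
    return max(0.0, z)


def neuron_output(inputs, weights, bias, activation):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Hypothetical neuron: the weighted sum is 0.5 - 2.0 + 0.5 = -1.0.
inputs, weights, bias = [1.0, 2.0], [0.5, -1.0], 0.5
for activation in (sigmoid, tanh, relu):
    value = neuron_output(inputs, weights, bias, activation)
    print(f"{activation.__name__}: {value:.4f}")
```

The same weighted sum yields three different outputs, which shows that the activation function, not the sum, determines the shape of the neuron's response.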

The perceptron is a supervised learning algorithm used for binary classification. It is designed to solve problems where the objective is to determine whether a given input belongs to a particular class or not.
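The classic perceptron learning rule can be sketched in a few lines. The example below learns the logical AND function, chosen here only because it is a small, linearly separable binary problem; the function names, the learning rate, and the epoch count are assumptions made for illustration.

```python
def predict(weights, bias, x):
    """Return 1 if the weighted sum reaches the threshold, otherwise 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation >= 0 else 0


def train_perceptron(samples, labels, learning_rate=1.0, epochs=10):
    """Perceptron rule: adjust the weights only when a sample is misclassified."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in zip(samples, labels):
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + learning_rate * error * xi
                       for w, xi in zip(weights, x)]
            bias += learning_rate * error
    return weights, bias


# Logical AND: the output is 1 only when both inputs are 1.
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 0, 0, 1]

weights, bias = train_perceptron(samples, labels)
predictions = [predict(weights, bias, x) for x in samples]
print("predictions:", predictions)
```

Because the update is zero for correctly classified samples, the weights stop changing once every training example is on the right side of the decision boundary.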

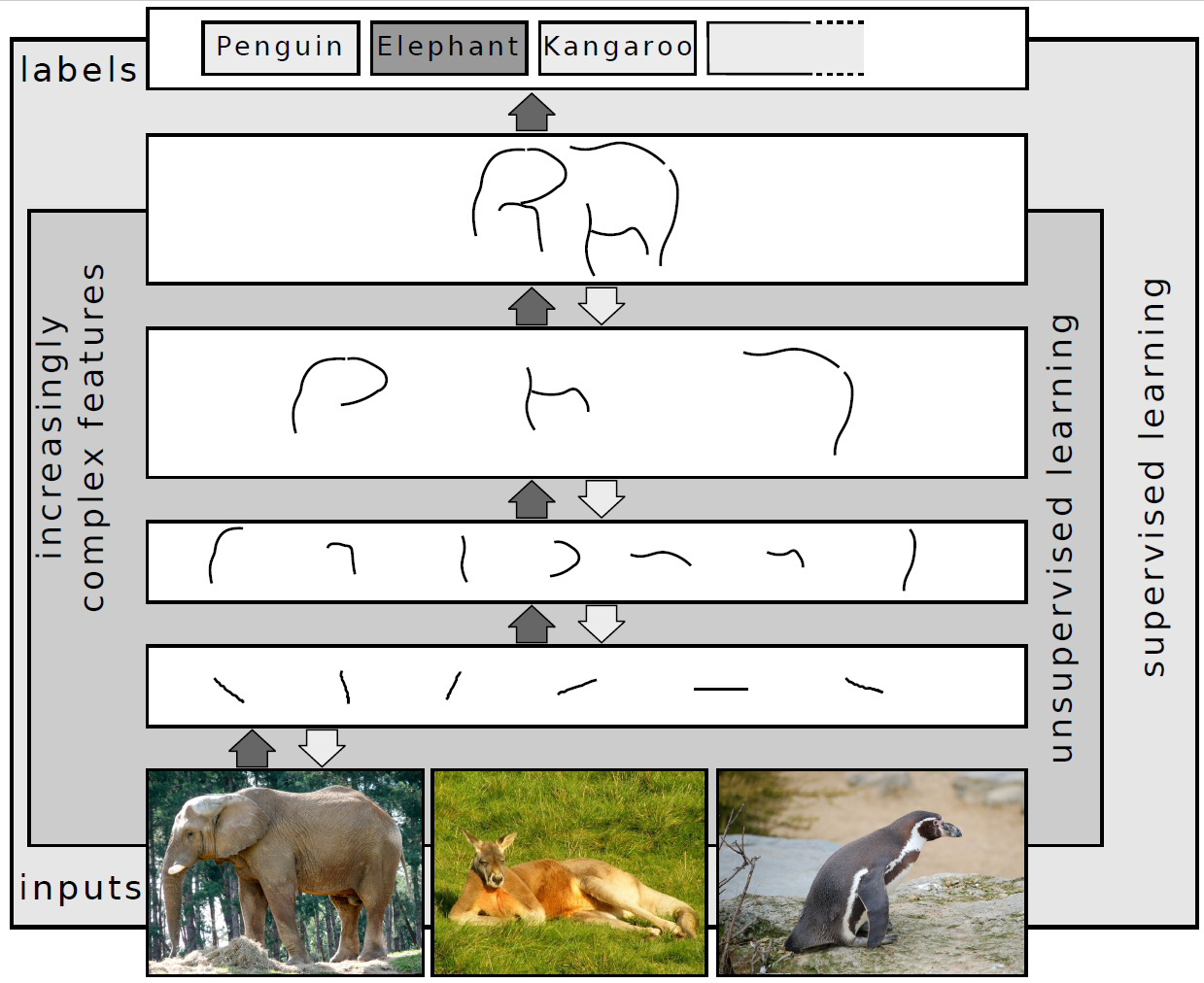

A deep neural network (DNN), also known as a deeply hierarchical neural network, is a type of artificial neural network with multiple processing layers, generally more than two. These networks are called "deep" because of their stacked layers, which build complex hierarchical representations of the data.

Layered architecture: Deep neural networks are composed of multiple layers, generally divided into three main types: an input layer, hidden layers, and an output layer.

Training deep neural networks may require large volumes of data and computing power.

    # Step 3: Add a dense output layer with softmax activation
    # The layer has 2 neurons for a binary classification task, and softmax
    # converts their outputs into class probabilities
    model.add(Dense(units=2, activation='softmax'))

    # Step 4: Compile the model
    # Stochastic gradient descent (SGD) is the optimizer, with a learning rate of 0.01
    # (current Keras versions use `learning_rate`; the older `lr` name is deprecated)
    # The loss is 'categorical_crossentropy', the usual choice for a softmax
    # classifier; it assumes one-hot encoded labels
    # ('mean_squared_error' is better suited to regression problems)
    # Model performance is measured in terms of 'accuracy'
    sgd = SGD(learning_rate=0.01)
    model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])
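To show what the softmax output layer computes, here is a library-free sketch of softmax together with the categorical cross-entropy loss that is commonly paired with it for classification. The function names and example scores are assumptions for illustration and do not reproduce Keras internals.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)
    # Subtracting the maximum leaves the result unchanged but avoids overflow.
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(probabilities, one_hot_target):
    """Negative log-probability assigned to the true class."""
    return -sum(t * math.log(p)
                for p, t in zip(probabilities, one_hot_target) if t > 0)


# Hypothetical raw outputs of the two output neurons for one example.
probs = softmax([2.0, 0.0])
loss = categorical_cross_entropy(probs, [1, 0])  # true class is class 0
print("probabilities:", [round(p, 4) for p in probs])
print("loss:", round(loss, 4))
```

The loss shrinks toward zero as the probability assigned to the true class approaches 1, which is the behaviour a classifier's loss function should have.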

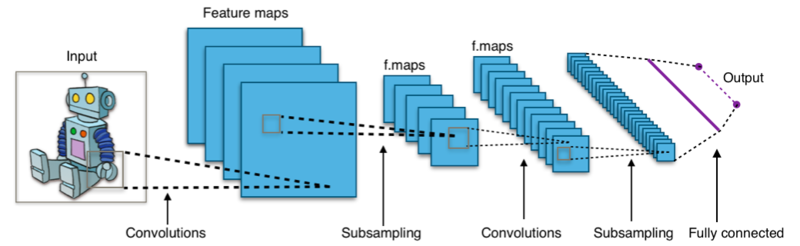

Convolutional neural networks (CNNs) are a class of neural network architectures designed primarily for image analysis. They have been particularly effective in tasks such as image classification, object detection, and image segmentation.

In summary, CNNs follow a hierarchical architecture, where convolutional layers learn local features, and these features are then combined in subsequent layers to form more complex representations. The nonlinearity introduced by the ReLU activation function is crucial to allow the model to learn nonlinear relationships in the data.

A kernel in the context of image processing, also called a filter or mask, is a small matrix that is applied to an image using a convolution operation. The purpose of applying these kernels is to perform various filtering operations on the image, such as edge detection, detail enhancement, highlighting certain features, etc.
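The sliding-window operation can be sketched without any library. The example below applies a 3x3 vertical-edge kernel to a tiny synthetic image; the image, the kernel values (a Prewitt-style filter), and the function name are assumptions for illustration. As in most deep learning libraries, the kernel is applied without flipping, which is strictly a cross-correlation.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1)."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the kernel element-wise with the window and sum.
            row.append(sum(image[i + di][j + dj] * kernel[di][dj]
                           for di in range(kernel_h)
                           for dj in range(kernel_w)))
        output.append(row)
    return output


# Synthetic 4x6 image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

# Vertical-edge kernel: responds where brightness changes from left to right.
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

edges = convolve2d(image, kernel)
for row in edges:
    print(row)
```

The response is nonzero only in the columns that straddle the dark-to-bright boundary and zero over the uniform regions, which is what edge detection means in this setting.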

Scientific datasets come in fundamentally different structural forms. The choice of architecture depends on the data modality.

Three main modalities in scientific contexts:

Note: This section is designated as asynchronous reading material.

Convolutional Neural Network (CNN): processes 2D grids using learned convolution filters. Key operations:

Types of uncertainty:

Distribution shift: training and test data come from different distributions. Common in physics experiments (simulation ≠ real data).
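One simple way to notice such a shift is to compare summary statistics of a feature between the two samples. The sketch below computes the difference of means in units of the training standard deviation; the diagnostic itself, the function name, and the numbers are illustrative assumptions, not a method prescribed by the course.

```python
import statistics


def standardized_mean_shift(train_values, test_values):
    """Difference of means, measured in training standard deviations."""
    train_mean = statistics.mean(train_values)
    train_std = statistics.stdev(train_values)
    return (statistics.mean(test_values) - train_mean) / train_std


# Hypothetical feature values: simulation (training) vs. real measurements.
simulated = [1.0, 2.0, 3.0, 4.0, 5.0]
measured = [3.0, 4.0, 5.0, 6.0, 7.0]

shift = standardized_mean_shift(simulated, measured)
print(f"standardized mean shift: {shift:.3f}")
```

A value near zero suggests the feature is distributed similarly in both samples, at least in its mean; a large absolute value flags a feature whose simulated and measured distributions differ and that may need closer inspection.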

Types:

Strategies: