Data Science for Chemists

IPL Summer School, CPE Lyon

4. Data Mining

John Samuel

CPE Lyon

Year: 2023-2024

Email: john.samuel@cpe.fr


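Feature construction derives new features from existing ones, for example by combining or transforming raw variables. It is an essential step in the data preprocessing pipeline in machine learning, because it can make the data more informative for learning algorithms.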
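As a minimal sketch of the idea, the snippet below derives three new features (a ratio, a sum, and a log transform) from raw measurements. The sample values, the field names (`mass_g`, `volume_ml`, `h_donors`, `h_acceptors`) and the helper `construct_features` are hypothetical and chosen only for illustration.

```python
import math

# Hypothetical measurements for three samples (illustrative values only).
samples = [
    {"mass_g": 10.0, "volume_ml": 8.0, "h_donors": 1, "h_acceptors": 2},
    {"mass_g": 5.0, "volume_ml": 5.0, "h_donors": 0, "h_acceptors": 4},
    {"mass_g": 12.0, "volume_ml": 10.0, "h_donors": 3, "h_acceptors": 3},
]

def construct_features(sample):
    """Return a copy of the sample with three derived features added."""
    enriched = dict(sample)
    # Ratio feature: density combines two raw measurements.
    enriched["density_g_per_ml"] = sample["mass_g"] / sample["volume_ml"]
    # Sum feature: total number of hydrogen-bonding sites.
    enriched["h_bond_sites"] = sample["h_donors"] + sample["h_acceptors"]
    # Log transform: compresses the range of a skewed positive quantity.
    enriched["log_mass"] = math.log(sample["mass_g"])
    return enriched

enriched_samples = [construct_features(s) for s in samples]
for row in enriched_samples:
    print(row)
```

Whether a constructed feature actually helps depends on the learning algorithm and the data, so it is usually worth checking on a validation set.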


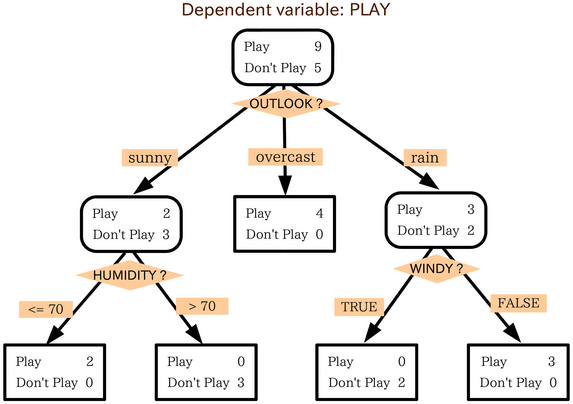

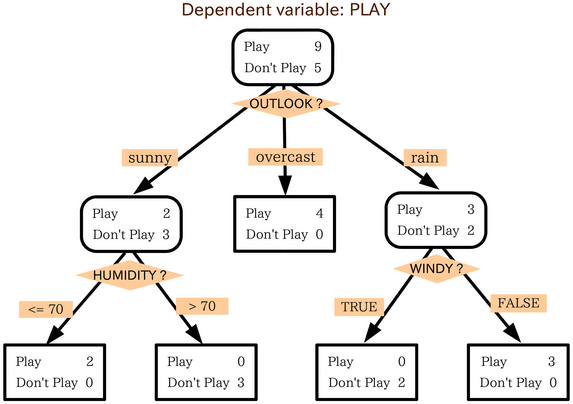

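A decision tree is a decision support tool that uses a tree-like model to represent decisions and their possible consequences. In machine learning, each internal node tests a feature, each branch corresponds to an outcome of that test, and each leaf gives a prediction.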
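The following is a rough sketch of how a small classification tree could be grown, assuming Gini impurity as the split criterion and axis-aligned threshold tests (one common choice, not necessarily the one used in the course). The data (`molecular weight`, `logP`, solubility labels) and the function names `gini`, `best_split`, `build_tree` and `predict` are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels (0.0 means perfectly pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(rows, labels):
    """Return (impurity, feature index, threshold) with the lowest weighted Gini."""
    best = None
    for j in range(len(rows[0])):
        for threshold in sorted({row[j] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[j] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[j] > threshold]
            if not left or not right:
                continue  # a split must send samples to both sides
            impurity = (len(left) * gini(left) + len(right) * gini(right)) / len(rows)
            if best is None or impurity < best[0]:
                best = (impurity, j, threshold)
    return best

def build_tree(rows, labels, depth=0, max_depth=3):
    """Grow a tree recursively; leaves are class labels, nodes are dicts."""
    majority = Counter(labels).most_common(1)[0][0]
    if depth == max_depth or gini(labels) == 0.0:
        return majority
    split = best_split(rows, labels)
    if split is None:
        return majority
    _, j, threshold = split
    left = [i for i, row in enumerate(rows) if row[j] <= threshold]
    right = [i for i, row in enumerate(rows) if row[j] > threshold]
    return {
        "feature": j,
        "threshold": threshold,
        "left": build_tree([rows[i] for i in left], [labels[i] for i in left],
                           depth + 1, max_depth),
        "right": build_tree([rows[i] for i in right], [labels[i] for i in right],
                            depth + 1, max_depth),
    }

def predict(tree, row):
    """Follow the tests from the root down to a leaf label."""
    while isinstance(tree, dict):
        branch = "left" if row[tree["feature"]] <= tree["threshold"] else "right"
        tree = tree[branch]
    return tree

# Hypothetical data: [molecular weight, logP] with a solubility label.
rows = [[180.0, 1.2], [250.0, 3.5], [300.0, 0.8],
        [150.0, 4.1], [220.0, 2.0], [270.0, 3.0]]
labels = ["soluble", "insoluble", "soluble", "insoluble", "soluble", "insoluble"]

tree = build_tree(rows, labels)
print(tree)
print(predict(tree, [200.0, 1.0]))
```

On this toy data a single test on the second feature separates the two classes, so the tree has one internal node and two leaves.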

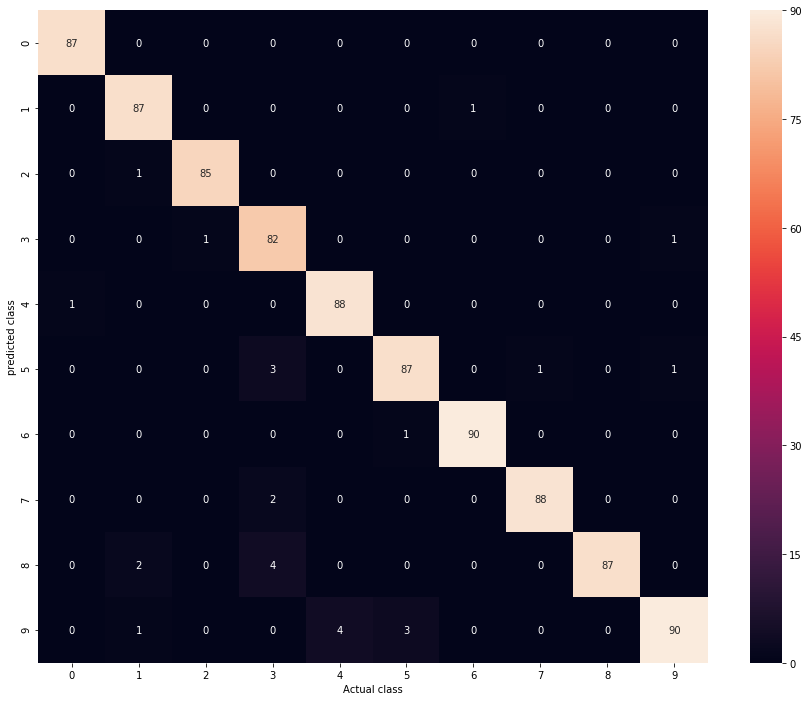

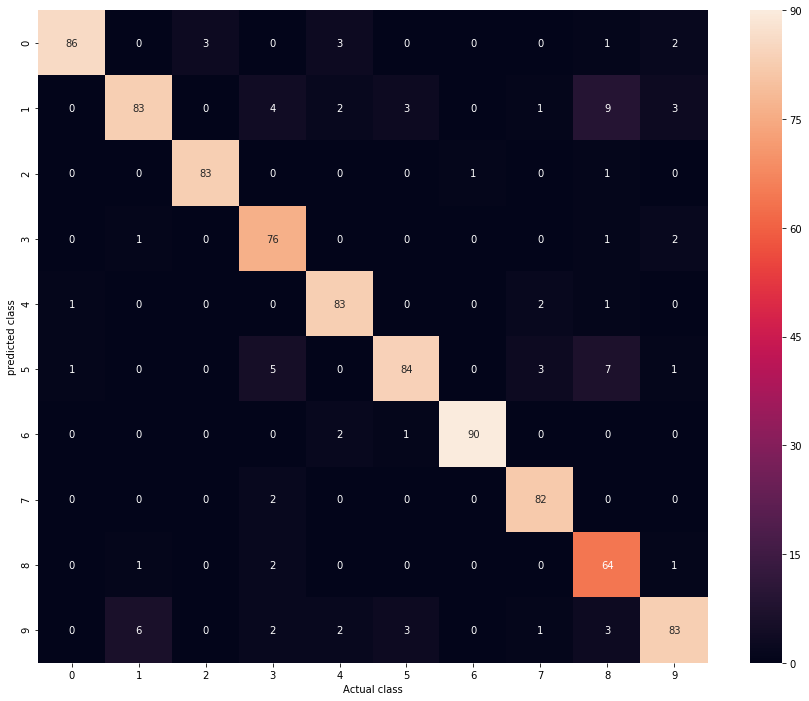

Ensemble learning combines multiple learning models to improve predictive performance compared to a single model. Decision tree forests (random forests) are a common example: many decision trees are constructed during the training phase, each on a bootstrap sample of the training data, and their predictions are aggregated by majority vote for classification or by averaging for regression.
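A simplified sketch of the forest idea is given below. To keep it short, each "tree" is only a one-split stump rather than a full decision tree, which is an assumption made for illustration; the bootstrap sampling, the random feature subset and the majority vote are the parts that matter here. All data and names (`fit_stump`, `fit_forest`, `predict_forest`) are hypothetical.

```python
import random
from collections import Counter

def majority(labels):
    """Most frequent label in a list."""
    return Counter(labels).most_common(1)[0][0]

def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def fit_stump(rows, labels, feature_indices):
    """Train a one-split tree (stump) restricted to the given features."""
    fallback = majority(labels)
    best = None
    for j in feature_indices:
        for t in sorted({row[j] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[j] <= t]
            right = [y for row, y in zip(rows, labels) if row[j] > t]
            if not left or not right:
                continue
            impurity = (len(left) * gini(left) + len(right) * gini(right)) / len(rows)
            if best is None or impurity < best[0]:
                best = (impurity, j, t, majority(left), majority(right))
    if best is None:
        # No valid split (e.g. all sampled rows identical): constant predictor.
        return lambda row: fallback
    _, j, t, left_label, right_label = best
    return lambda row: left_label if row[j] <= t else right_label

def fit_forest(rows, labels, n_trees=25, seed=0):
    """Train n_trees stumps, each on a bootstrap sample and a random feature subset."""
    rng = random.Random(seed)
    n, n_features = len(rows), len(rows[0])
    k = max(1, int(n_features ** 0.5))  # features considered per stump
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # sampling with replacement
        feats = rng.sample(range(n_features), k)
        forest.append(fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feats))
    return forest

def predict_forest(forest, row):
    """Aggregate the individual predictions by majority vote."""
    return majority([tree(row) for tree in forest])

# Hypothetical two-feature data with two well-separated classes.
rows = [[1, 10], [2, 11], [3, 12], [4, 13], [7, 20], [8, 21], [9, 22], [10, 23]]
labels = ["A", "A", "A", "A", "B", "B", "B", "B"]

forest = fit_forest(rows, labels)
print(predict_forest(forest, [0.5, 9]))
print(predict_forest(forest, [11, 25]))
```

Because each stump sees a different resample and a different feature subset, individual errors tend to differ, and the vote is typically more stable than any single member.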

Feature selection is the process of choosing a subset of relevant features from a large number of available features. Discarding irrelevant or redundant features reduces dimensionality and can limit overfitting.
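One simple family of approaches is filter methods, which score each feature independently and keep the best ones. The sketch below assumes the absolute Pearson correlation with the target as the score, which is only one of several possible criteria. The feature names, the values and the helper `select_top_k` are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0
    return cov / (spread_x * spread_y)

def select_top_k(columns, target, k):
    """Rank features by |correlation with target| and keep the k best."""
    scores = {name: abs(pearson(values, target)) for name, values in columns.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k], scores

# Hypothetical feature columns and a hypothetical measured target property.
columns = {
    "logP": [1.0, 2.0, 3.0, 4.0, 5.0],
    "constant": [7.0, 7.0, 7.0, 7.0, 7.0],
    "noise": [3.0, 1.0, 4.0, 1.0, 5.0],
}
target = [2.1, 3.9, 6.2, 8.0, 9.9]

selected, scores = select_top_k(columns, target, k=2)
print(selected)
print(scores)
```

A filter like this ignores interactions between features; wrapper and embedded methods (for example, importance scores from a tree ensemble) are alternatives when such interactions matter.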